The Future

Transmission

As electricity prices rise there is pressure to make our current systems more efficient.

Some commentators have suggested converting the whole grid to direct current, DC. This would decrease grid losses and extend the grid's practical geographic reach.

As it turns out my family was once heavily invested in DC systems (see The McKie Family - Untimely Death), leading to events without which I would not have been born.

As advocates point out, most of today's electronic devices could easily be converted to run on DC. But most current-generation motorised appliances could not, and would require an inverter.

All the transmission and distribution transformers, of which there are hundreds of thousands, would need to be replaced by much more complex electronic equivalents that may, nevertheless, be less costly when mass produced.

Although the conversion would be daunting it would be a way of saving some of the energy presently lost to simply heating the environment.

It would also reduce or eliminate the constant low frequency radiation that now surrounds us: the buzzing noise you hear if you touch your finger to an open microphone connection.

This could be a dubious benefit as there is no evidence that this radiation is harmful. It is even possible that it is actually protecting us from more dangerous natural radiation, and is a factor in people living longer today than in the past.

In the meantime the use of DC links within the Eastern Australian grid is now relatively mature. In 2006 the first Basslink undersea HVDC interconnector came into service, running at 400 kV and rated to transmit up to 500 megawatts (MW) in either direction. At 290 km, the undersea cable component was then the second longest of its type in the world.

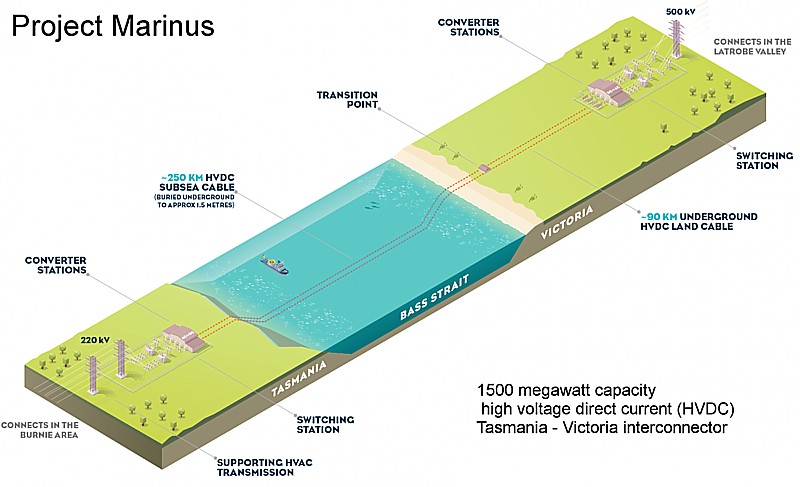

Given its success, the $3.5bn 'Marinus Link Project' is now underway (planned to come on-line in the late 2020s). It incorporates an additional 1500 MW high voltage direct current (HVDC) undersea interconnector, in order to exploit Tasmania's excellent wind resources.

Similar technology could be used on other very long interlinks, but at present the economics still favour AC links, in part because grid synchronisation is easier with AC at both ends and multiple links. Thus the Heywood interlink, between Victoria and South Australia, is 275 kV AC and maintains synchronisation between the states.

The failure of the Heywood link in 2020 provides a good example of the challenges presented by misaligned electricity demand and supply peaks; in that case, due to a very large fall in wind generation.

"South Australia separates from NEM, again, as interconnector troubles return"

Update: As of 8:05pm, the Australian Energy Market Operator has confirmed that South Australia has been re-synchronised with the rest of the National Electricity Market and power flow has been restored via the Heywood terminal.

Michael Mazengarb in Renew Economy, 2 March 2020

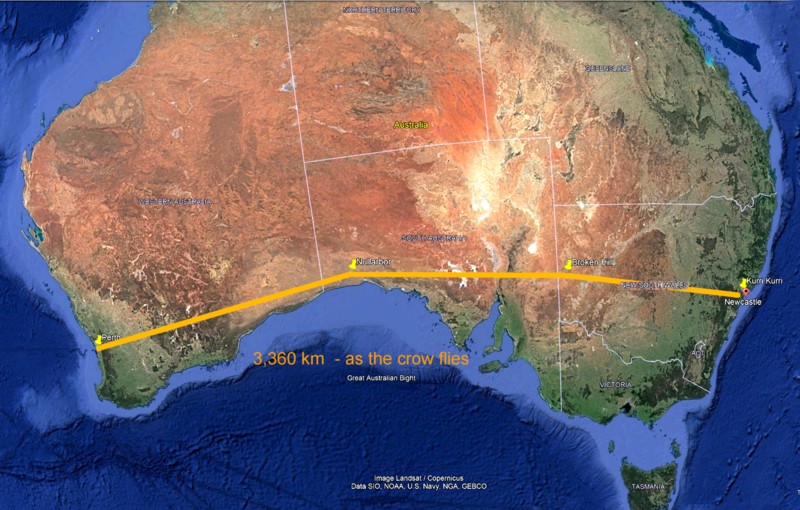

Some blue-sky enthusiasts suggest that a DC grid will form the future electricity transport backbone, for example spanning the 4,000 kilometres from Sydney to Perth.

This would pose significant challenges including crossing two mountain ranges and a lot of desert. Losses would be prohibitive unless the voltage used was very high indeed, in the millions of volts, requiring very high transmission towers or exceptional insulation and cable cooling.
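The link between voltage and loss can be made concrete with a rough calculation. The Python sketch below assumes a simple two-conductor DC line in which the current is the power divided by the voltage and the resistive loss is the current squared times the resistance. The conductor resistance of 0.01 ohm per kilometre and the 1,000 MW load are illustrative assumptions, not figures for any real or proposed line; only the 4,000 km distance comes from the text above.

```python
def dc_line_loss_fraction(power_w, voltage_v, length_km, ohms_per_km=0.01):
    """Approximate fraction of sent power lost as heat in a two-conductor DC line.

    ohms_per_km is an assumed, illustrative conductor resistance.
    voltage_v is the voltage between the two conductors at the sending end.
    """
    current_a = power_w / voltage_v             # I = P / V
    # The current flows out along one conductor and back along the other.
    resistance_ohm = 2 * length_km * ohms_per_km
    loss_w = current_a ** 2 * resistance_ohm    # P_loss = I^2 * R
    return loss_w / power_w


if __name__ == "__main__":
    # Hypothetical 1,000 MW sent 4,000 km at several line voltages.
    for kv in (400, 800, 1600):
        fraction = dc_line_loss_fraction(1000e6, kv * 1e3, 4000)
        print(f"{kv} kV: {fraction:.1%} lost")
```

Under these assumptions about half the power is lost at 400 kV, about an eighth at 800 kV and about three per cent at 1,600 kV. Each doubling of the voltage cuts the loss to a quarter, which is why such a long link pushes the voltage so high.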

An alternative could be a superconductive interlink.

The electrical conductivity of most metals (and other conductors) changes according to temperature.

Except in some exotic materials, they become more conductive as temperature falls.

Some metals, like niobium-titanium or niobium-tin alloys, become superconductors at very low temperatures, just above absolute zero. That means that electrical currents are transmitted freely with no resistive losses and, consequently, no electrical heating.

The Large Hadron Collider (LHC) has miles of superconducting magnetic coils. Many magnetic resonance imaging (MRI) machines in hospitals employ similar coils.

To make such a superconducting magnet the wire needs to be bathed in liquid helium. This reduces its temperature to just four degrees above absolute zero. The boiled-off helium gas is then recovered and re-compressed to liquid helium in extremely sophisticated cryogenic apparatus. Not only is this apparatus very expensive but it consumes energy to remove heat leaking into the system.

According to the CERN website, the LHC's cryogenic system employs 120 tonnes of helium to keep the superconductors at 1.9 K (−271 °C, −456 °F). Running the cryogenic system, including circulating the liquid, recompressing the boiled-off helium gas and disposing of the heat, continuously consumes 40 MW of electricity.

If this cooling system fails, at any point in the conduction path, the material ceases to superconduct. The very high currents in use instantaneously vaporise the metal at that point, often causing an explosion and/or very serious equipment damage. This happened catastrophically in one of the first runs of the LHC, shutting it down for nearly a year.

This risk, together with the financial and energy cost of supercooling, makes present generation superconductors impractical for commercial electricity transmission, particularly if they were stretched out in hot environments over hundreds or thousands of kilometres.

But research continues into high temperature superconductors. These already exist in the laboratory but seem to collapse under high magnetic fields, making the present generation useless for high power applications. Their operation involves quantum mechanics and is still somewhat mysterious. This is one area in which a better understanding of quantum mechanics, in part through work at the LHC, might change the future.

Generation

Assuming anthropogenic climate change has reached criticality, principally due to overpopulation, the World needs to urgently reduce its consumption of fossil fuels. See: Climate Change - a Myth? on this website.

At the moment our only significant economic alternatives to fossil fuels, presently required to provide energy when the wind is not blowing and the sun is not shining, are hydroelectricity and nuclear (fission) power. Recent studies show that 'fracking' for gas and even burning wood are potentially worse for the environment than burning coal.

Uranium is presently the principal nuclear fuel. But the very heavy elements, like uranium and gold, are created in comparatively rare neutron star mergers, so they were also relatively rare in the dust that coalesced to become the Earth. Accessible resources are therefore limited, maybe to a millennium's worth of fuel. Fortunately, to those resources we can add thorium, which can be 'bred' as a potentially safer nuclear fuel.

So with wind and solar generation meeting up to half the demand, the World could wean itself off burning coal and gas for electricity within a few decades; and still have sufficient energy to reduce dependence on petroleum and sustain a population of ten or eleven billion with more equity in health and material wellbeing, next century.

After uranium and thorium, we have an almost limitless source of energy - nuclear fusion.

The Sun is powered by fusion, but on Earth its energy fluctuates too much to be gathered easily, due to the Earth’s rotation and the tilt of its axis that gives us summer and winter. The Sun also gives us tides and generates the weather that gives us wind energy, but all these factors get in the way of the radiant energy reaching our solar panels on a consistent basis.

Some commentators have suggested that solar collectors in orbit would provide a 24/7 energy supply if we could get it down to the surface. We might do this with lasers or microwaves. In either case there would be very significant dangers and environmental considerations to be addressed. Either method would make a very nice James Bond, or even Star Wars, style death ray.

But we have already harnessed fusion on Earth many hundreds of times. It is the principal source of energy in the hydrogen bomb. Unfortunately, we don't want all that energy at once.

Scientists have recently succeeded in creating controlled fusion on a small scale in the laboratory. But this is far too small and costly to be of commercial use.

Somewhere in between would be good.

At the moment there is no practical commercial fusion reactor in sight, principally because conventional nuclear fission reactors, using uranium (and other unstable heavy elements), are a lot less complex and therefore less costly.

But work continues. A complexity or cost breakthrough in nuclear fusion would remove energy supply as a central issue facing mankind, particularly if we are also successful in reducing world population to sustainable levels in the future.

The ultimate Science Fiction (or Pons and Fleischmann / Back to the Future) dream is that particle physics may someday reveal a reversible way to store energy as mass and then recover it as energy when wanted, cheaply and with little or no loss. Batteries already do this on an infinitesimal scale but a gram or so of mass is presently out of reach.

This is like the alchemists' dream of turning base metals into gold.

It would be far more valuable than that. The entire energy transport network (the grid, gas and petrochemicals) would become redundant.
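To put a number on the gram of mass mentioned above, Einstein's relation E = mc² gives its energy equivalent. This short Python sketch is only an order-of-magnitude illustration: it assumes complete conversion with no losses, which no known technology can achieve, and the function names are hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the metre


def mass_to_energy_joules(mass_kg):
    """Energy equivalent of a mass at rest, E = m * c^2."""
    return mass_kg * SPEED_OF_LIGHT_M_PER_S ** 2


def joules_to_gwh(joules):
    """Convert joules to gigawatt-hours (1 GWh = 3.6e12 J)."""
    return joules / 3.6e12


if __name__ == "__main__":
    energy_j = mass_to_energy_joules(0.001)  # one gram, expressed in kilograms
    print(f"{energy_j:.3g} J, about {joules_to_gwh(energy_j):.0f} GWh")
```

One gram comes to roughly 9 × 10^13 joules, or about 25 gigawatt-hours.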